In a nutshell:

- I created a Knime workflow — the TroveKleaner — that uses a combination of topic modelling, string matching and other methods to correct OCR errors in large collections of texts. You can download it from the KNIME Hub.

- It works, but does not correct all errors. It doesn’t even attempt to do so. Instead of examining every word in the text, it builds a dictionary of high-confidence errors and corrections, and uses the dictionary to make substitutions in the text.

- It’s worth a look if you plan to perform computational analyses on a large collection of error-ridden digitised texts. It may also be of interest if you want to learn about topic modelling, string matching, ngrams, semantic similarity measures, and how all these things can be used in combination.

O-C-aarghh!

This post discusses the second in a series of Knime workflows that I plan to release for the purpose of mining newspaper texts from Trove, that most marvellous collection of historical newspapers and much more maintained by the National Library of Australia. The end-game is to release the whole process for geo-parsing and geovisualisation that I presented in this post on my other blog. But revising those workflows and making them fit for public consumption will be a big job (and not one I get paid for), so I’ll work towards it one step at a time.

Already, I have released the Trove KnewsGetter, which interfaces with the Trove API to allow you to download newspaper texts in bulk. But what do you do with 20,000 newspaper articles from Trove?

Before you even think about how to analyse this data, the first thing you will probably do is cast your eyes over it, just to see what it looks like.

Cue horror.

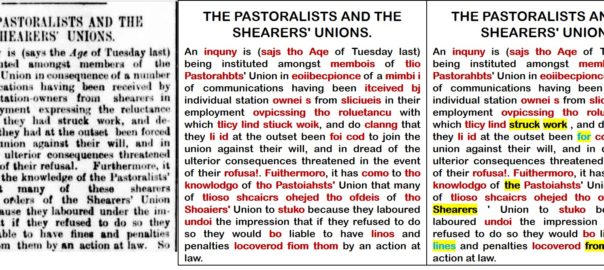

A typical reaction upon seeing Trove’s OCR-derived text for the first time. Continue reading TroveKleaner: a Knime workflow for correcting OCR errors